
Cyber Security Testbeds and Malware Testing

Themes

Among the research topics of the Security Group, the main thrust of this one is to set up experimental testbeds in which we can check how attacks really work and experiment with them.

- TestREx, a testbed for exploit analysis

- MalwareLab: a testbed for checking whether exploit kits really work

- Analysis of malware as a software artefact

This may seem obvious, but we would like to find a general approach to testing malware and attack strategies, and to identify what they have in common.

TestREx (A Testbed for Repeatable Exploits)

In our paper at USENIX CSET'14 we presented TestREx, a testbed for repeatable exploits that can pack and run applications together with their environments, inject exploits, monitor their success, and generate security reports. In 2016 the TestREx process was also the subject of a US patent by SAP AG.

The TestREx design was inspired by the following empirical observations: (1) software systems are constantly evolving, so certain exploits might work only against certain version(s) of an application; (2) an application might be vulnerable only when deployed within a certain software environment. Therefore, besides running scripted exploits against chosen applications, TestREx can answer the following questions:

- Given a software environment E and an exploit X that successfully subverts an application A running on E:

  - Will X succeed against A running on another environment E'?

  - Will X succeed against another version of A, A', running on E?

  - Will X succeed against another version of A, A', running on E'?
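The three questions amount to replaying one exploit over a grid of application versions and environments. The following minimal Python sketch enumerates that grid; the function name `experiment_matrix` and the string labels are hypothetical and not part of the TestREx code base.

```python
from itertools import product


def experiment_matrix(app_versions, environments, baseline):
    """Return every (application version, environment) pair except the baseline.

    `baseline` is the (A, E) pair on which exploit X is already known to work;
    each remaining pair is one "will X still succeed here?" question.
    """
    return [pair for pair in product(app_versions, environments) if pair != baseline]


if __name__ == "__main__":
    # Hypothetical labels: A and E come from the text, nothing else does.
    for app, env in experiment_matrix(["A", "A'"], ["E", "E'"], ("A", "E")):
        print(f"run X against {app} on {env}")
```

With two versions and two environments it yields (A, E'), (A', E) and (A', E'), i.e. the three questions in order; longer lists generalize the same idea to many versions and environments.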

Instead of creating a separate virtual machine for every application/configuration, we use Linux Containers technology, which provides virtualization at the operating system level. Every application and/or its corresponding software configuration is a container: a sandboxed filesystem that reuses the same operating system kernel (these containers have no external access to the operating system on which they are actually deployed). To manage these containers, TestREx uses Docker, which provides a format for packing and running applications with a lightweight file repository. We use Docker to implement two types of containers: (1) software-specific containers, which contain the operating system, web server and database engine; (2) application-specific containers, which are built on top of a chosen software-specific container and also encapsulate the application files. The figure on the right shows an example WordPress 3.2 application container built on top of the “ubuntu-apache-mysql” software container.
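As an illustration of the two container layers, the sketch below renders the Dockerfile of an application-specific container on top of a software-specific image. Only the base image name "ubuntu-apache-mysql" comes from the text; the function, the application directory and the `/var/www/html` web root are assumptions, and the actual TestREx configuration files may look different.

```python
def application_dockerfile(base_image, app_dir, web_root="/var/www/html", setup=()):
    """Render a Dockerfile for an application-specific container (hypothetical helper).

    The application layer only adds the application files (plus optional
    setup commands) on top of an existing software-specific image.
    """
    lines = [f"FROM {base_image}", f"COPY {app_dir} {web_root}"]
    lines += [f"RUN {cmd}" for cmd in setup]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # "ubuntu-apache-mysql" is the software container named in the text;
    # the application directory and web root are assumptions.
    print(application_dockerfile("ubuntu-apache-mysql", "wordpress-3.2/"), end="")
```

Because the software-specific image is shared, switching an application to another environment E' only means changing the `FROM` line.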

Currently, TestREx supports only one type of exploit: Python scripts consisting of metadata and code that uses Selenium WebDriver to automate the web browser and execute the exploit. We chose Selenium because it can precisely reproduce an attacker's actions through the web browser and has native JavaScript support. As future work, we plan to extend TestREx's exploitation capabilities by creating other classes of exploits.
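A minimal sketch of what such a script might look like, assuming a hypothetical guestbook application with a stored XSS flaw. The metadata keys, the `/guestbook` path, the `message` field and the `run(driver, base_url)` entry point are all assumptions, not the TestREx exploit format. The code relies only on the Selenium WebDriver methods `get`, `find_element`, `send_keys`, `submit` and the `page_source` property.

```python
# Hypothetical exploit script: metadata plus code that drives a browser.
# Field names, URL path and form field are assumptions for illustration.
METADATA = {
    "name": "Stored XSS in guestbook",
    "application": "guestbook-1.0",  # hypothetical target application
    "category": "xss",
}

PAYLOAD = "<script>alert('testrex')</script>"


def run(driver, base_url):
    """Replay the attacker's browser actions; return True if the exploit worked.

    `driver` is expected to be a Selenium WebDriver (or anything exposing the
    same get / find_element / page_source interface).
    """
    driver.get(base_url + "/guestbook")
    field = driver.find_element("name", "message")  # equivalent to By.NAME
    field.send_keys(PAYLOAD)
    field.submit()
    # Reload the page and check whether the payload comes back unescaped.
    driver.get(base_url + "/guestbook")
    return PAYLOAD in driver.page_source
```

In practice `driver` would be a real browser session, e.g. `webdriver.Firefox()` from the `selenium` package; keeping it as a parameter also lets the script be exercised against a stub without a browser. Looking for the unescaped payload in `page_source` is a simple success heuristic; a stricter check could wait for the JavaScript alert instead.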

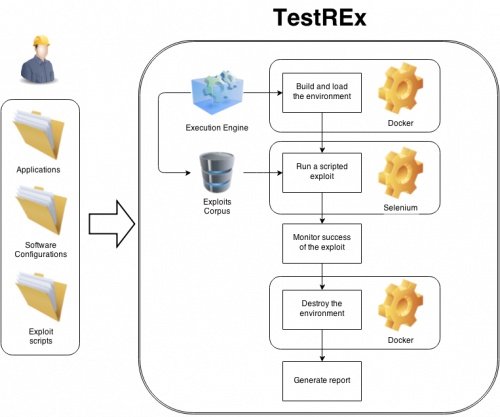

A typical TestREx use case is shown in the figure on the right:

- A tester provides the application files, creates an application-specific configuration file (Dockerfile), and writes a scripted exploit.

- All of the above artifacts must be copied to the appropriate folders within TestREx (targets/applications, targets/configurations, exploits).

- When started, TestREx uses Docker to run the desired application by building and invoking the corresponding application-specific container.

- One or more scripted exploits, either selected by the tester or taken automatically from the vulnerability corpus, run against the application residing within the invoked container.

- TestREx monitors the success or failure of the exploit(s), destroys the application-specific container, and generates a CSV report.
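The workflow above can be sketched as a loop that starts a fresh container per exploit, always tears it down, and collects one CSV row per run. This is a minimal Python sketch under stated assumptions: `start_container` and `stop_container` are hypothetical callables (in a real setup they would wrap `docker build`/`docker run` and `docker rm`), and the three CSV columns are illustrative rather than the actual TestREx report format.

```python
import csv
import io


def run_campaign(targets, exploits, start_container, stop_container):
    """Run each exploit against each target in a fresh container; return CSV text.

    targets:         names of application-specific containers (hypothetical)
    exploits:        {exploit_name: callable(url) -> bool}
    start_container: builds/starts a container and returns its base URL
    stop_container:  destroys the container again
    """
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow(["target", "exploit", "result"])
    for target in targets:
        for name, exploit in exploits.items():
            url = start_container(target)
            try:
                result = "success" if exploit(url) else "failure"
            except Exception:
                result = "error"  # the exploit script itself crashed
            finally:
                stop_container(target)  # never leave a container behind
            writer.writerow([target, name, result])
    return out.getvalue()
```

Starting a new container for every exploit is a design choice made here so that side effects of one exploit (e.g. a stored payload) cannot influence the next run; the `finally` clause guarantees the container is destroyed even if the exploit script crashes.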

We also provide a corpus of example vulnerable applications, taken from related work or implemented by us:

- 83 PHP Vulnerabilities (from BugBox)

- 10 Java Vulnerabilities (from WebGoat)

- 7 Server-side JavaScript Vulnerabilities

The MalwareLab

Exploit kits are attack tools traded on cybercrime black markets and are responsible for hundreds of millions of system infections worldwide. We have infiltrated, and are currently monitoring, some of the most important black markets in the cybercrime scene (the results of the analysis are reported further down). As part of our investigations, we gathered more than 40 exploit kits leaked from these markets. After a thorough technical analysis of their capabilities and characteristics (see "Anatomy of Exploit Kits: Preliminary Analysis of Exploit Kits as Software Artefacts" below), we started testing them.

The goal of this experimental approach, presented in our paper at USENIX CSET'13, is to estimate the capabilities of exploit kits in terms of infection potential and value returned to the attacker.

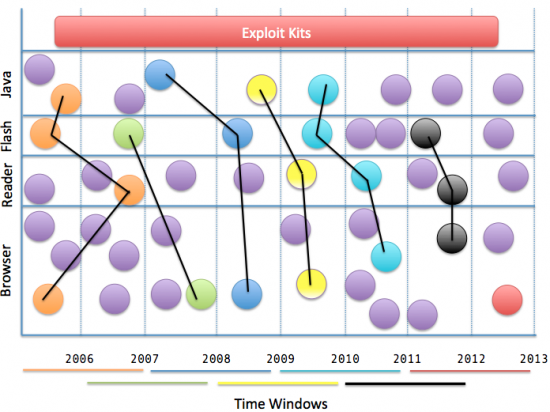

To do this, we simulate traffic reaching the exploit kits and measure metrics such as the kits' infection rates. To this end, we randomly generate plausible machine configurations spanning 2006 to 2013 in moving two-year windows, and test them against our exploit kits. An example is given in the figure. Configurations are installed (with windows calculated on release dates) on the following operating systems:

- Windows XP

  - Original

  - Service pack 1

  - Service pack 2

  - Service pack 3

- Windows Vista

  - Original

  - Service pack 1

  - Service pack 2

- Windows 7

  - Original

  - Service pack 1

The installed software includes:

- Internet Explorer

- Opera Browser

- Firefox

- Java

- (QuickTime)

- Flash

- Acrobat Reader

For each time window we create 30 random samples of application versions and automatically deploy each sample on a machine. In this way we create almost 50,000 system configurations, which are then attacked by the exploit kits. Upon successful exploitation, our exploit kits deliver Casper, our own good-ghost-in-the-browser malware. If Casper is successfully executed on the attacked machine, it pings back our Malware Distribution Server, which registers the successful exploitation. Overall, our infrastructure keeps a record of configurations, instance runs, attacked operating systems and, of course, successful and unsuccessful infections.
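The sampling step can be sketched as follows, assuming that the two-year windows slide by one year and that a version is eligible for a window only if it was released inside it. Both assumptions, the catalogue format and all function names are ours, and the versions and dates in the example are made up.

```python
import random
from datetime import date


def two_year_windows(first_year, last_year):
    """Moving two-year windows, assumed to slide by one year (2006-07, ..., 2012-13)."""
    for year in range(first_year, last_year):
        yield date(year, 1, 1), date(year + 1, 12, 31)


def sample_configurations(catalogue, window, n=30, rng=None):
    """Draw n configurations, one version per product released inside the window.

    `catalogue` maps product -> [(version, release_date), ...]. Products with no
    release in the window are left out of the configuration (an assumption).
    """
    rng = random.Random() if rng is None else rng
    start, end = window
    available = {}
    for product, versions in catalogue.items():
        in_window = [v for v, released in versions if start <= released <= end]
        if in_window:
            available[product] = in_window
    return [{p: rng.choice(vs) for p, vs in available.items()} for _ in range(n)]


if __name__ == "__main__":
    # Illustrative catalogue with made-up versions and dates, not the lab's data.
    catalogue = {
        "browser": [("b1", date(2006, 5, 1)), ("b2", date(2009, 1, 1))],
        "plugin": [("p1", date(2007, 2, 1)), ("p2", date(2008, 6, 1))],
    }
    rng = random.Random(42)
    for window in two_year_windows(2006, 2013):
        configs = sample_configurations(catalogue, window, rng=rng)
        print(window[0].year, window[1].year, configs[0])
```

Passing a seeded `random.Random` makes a campaign reproducible, which matters when the same configurations must be replayed against several exploit kits.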

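Since every run ends either with or without a Casper ping-back, infection rates reduce to counting. A minimal sketch, assuming a hypothetical `Run` record with illustrative field names (the actual MalwareLab database schema is not described here):

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    """One attack attempt; all field names are illustrative."""
    kit: str        # exploit kit under test
    os: str         # e.g. "Windows XP SP2"
    config_id: int  # sampled software configuration
    infected: bool  # True if Casper pinged back the distribution server


def infection_rates(runs, by="kit"):
    """Fraction of runs that ended with a Casper ping-back, grouped by a Run field."""
    attempts, infections = Counter(), Counter()
    for run in runs:
        key = getattr(run, by)
        attempts[key] += 1
        infections[key] += run.infected  # bool counts as 0 or 1
    return {key: infections[key] / attempts[key] for key in attempts}
```

The same function gives per-operating-system rates with `infection_rates(runs, by="os")`.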

Analysis of Exploit Kits

Over the past couple of years, the source code of a number of private exploit kits has leaked publicly. We identified information on more than 70 exploit kits, and of those we were able to successfully download and deploy 33 instances of 24 web malware families (such as Crimepack, Eleonore and Fragus).

In our paper published at ESSoS'13 we pursued the following goals:

- Study the functional aspects of exploit kits and offer a taxonomy of the routines implemented in them.

- Classify the exploit delivery mechanisms.

- Uncover web crawler evasion techniques used by exploit kits.

- Understand the user interface of an exploit kit, find out what data it provides and what management capabilities are available to the customer.

- Investigate code re-use across exploit kits and determine whether malware authors share a common code base.

- Study the code protection mechanisms aimed at preventing unauthorized code distribution and complicating the analysts' work.
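For the code re-use goal, one common way to quantify shared code between two kits is the Jaccard similarity of their token k-grams. The sketch below illustrates that technique only; it is not necessarily the method used in the ESSoS'13 paper, and the tokenizer, the default `k=5` and the function names are assumptions.

```python
import re
from itertools import combinations


def shingles(source, k=5):
    """Set of k-token windows; tokens are identifiers/numbers or single symbols."""
    tokens = re.findall(r"\w+|[^\w\s]", source)
    return {tuple(tokens[i:i + k]) for i in range(len(tokens) - k + 1)}


def jaccard(a, b):
    """|A & B| / |A | B|, defined as 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def reuse_matrix(kits, k=5):
    """Pairwise similarity for {kit_name: source_code}; keys are sorted name pairs."""
    sh = {name: shingles(src, k) for name, src in kits.items()}
    return {(x, y): jaccard(sh[x], sh[y]) for x, y in combinations(sorted(sh), 2)}
```

Token-level shingles ignore whitespace changes, but simple renaming or obfuscation would lower the score, so high values suggest shared code while low values do not rule it out.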

The results of our study are quite surprising. We expected exploit delivery mechanisms to be sophisticated: to work as snipers, carefully studying the remote machine and then delivering only the right exploit to the right victim. While most kits do perform this study, its results are not used in any significant way to select the exploit. Instead, the attack is performed machine-gun style. The main purpose of victim fingerprinting seems to be to compile statistics and “dashboards” of traffic and malware installations. In other words, the main goal of exploit kits is to “improve the customer experience”. A large number of successful infections is expected to come from large volumes of traffic rather than from sophisticated attacks.
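The difference between the expected and the observed behaviour can be captured in a toy model: a "sniper" sends only the exploits that match the victim fingerprint, while a "machine gun" records the fingerprint for the dashboard and sends everything. This is an illustrative Python sketch, not code taken from any exploit kit; the `Exploit` type and the component names are hypothetical, and real kits may still apply some coarse filtering.

```python
from dataclasses import dataclass


@dataclass
class Exploit:
    name: str
    targets: set  # software components the exploit is written for (hypothetical)


def sniper(fingerprint, exploits):
    """Expected behaviour: deliver only exploits matching the victim's software."""
    return [e for e in exploits if e.targets & fingerprint]


def machine_gun(fingerprint, exploits, stats):
    """Observed behaviour: fingerprint only feeds statistics; every exploit is sent."""
    for component in fingerprint:
        stats[component] = stats.get(component, 0) + 1
    return list(exploits)
```

In this model the fingerprint changes only the `stats` dictionary in `machine_gun`, never the list of exploits delivered, which mirrors the finding that fingerprinting serves the customer dashboard rather than exploit selection.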

People

The following is a list of people who have been involved in the project at some point in time.

- Luca Allodi (TU Eindhoven)

- Stanislav Dashevskyi

- Daniel Ricardo Dos Santos

- Vadim Kotov (now at Cylance)

- Antonino Sabetta (SAP AG)

Projects

This activity was supported by a number of projects.

Publications

- A. Sabetta, L. Compagna, S. Ponta, S. Dashevskyi, D.R. dos Santos, F. Massacci. Multi-context exploit test management. US Patent 20160314302, 2016. https://www.google.com/patents/US20160314302

- S. Dashevskyi, D.R. dos Santos, F. Massacci, A. Sabetta. TestREx: a Testbed for Repeatable Exploits. In: Proc. of Usenix Security CSET 2014, San Diego (CA), USA.

- L. Allodi, V. Kotov, F. Massacci. MalwareLab: Experimenting with Cybercrime Attack Tools. In: Proc. of Usenix Security CSET 2013, Washington D.C., USA.

- V. Kotov, F. Massacci. Anatomy of Exploit Kits: Preliminary Analysis of Exploit Kits as Software Artefacts. In: Proc. of ESSoS 2013, pp. 181–196.

Talks and Tutorials

- Luca Allodi. MalwareLab: Experimentation with Cybercrime Attack Tools. Presentation at Usenix Security CSET 2013, Washington D.C., USA, Aug 2013.

- Vadim Kotov. Anatomy of Exploit Kits. ESSoS'13, Paris, France, March 2013.

- Fabio Massacci. TestREx: A Testbed for Repeatable Exploits. Presentation at Usenix Security CSET 2014, San Diego, CA, USA, Aug 2014.

Software

- TestREx (a Testbed for Repeatable Exploits): see the TestREx wiki page to learn more.